Measuring AI Agent Autonomy in Practice

AI agents are now deployed across a wide range of contexts, from simple tasks like email triage to high-stakes scenarios such as cyber espionage. Understanding the spectrum of agent autonomy is crucial for safe deployment, yet there is surprisingly little empirical data on how agents are actually used in the real world. This article presents our analysis of millions of human-agent interactions, focusing on how much autonomy users grant to agents, how that autonomy changes as users gain experience, the domains in which agents operate, and the associated risks.

Key Findings

Our analysis surfaced four main findings about agent autonomy:

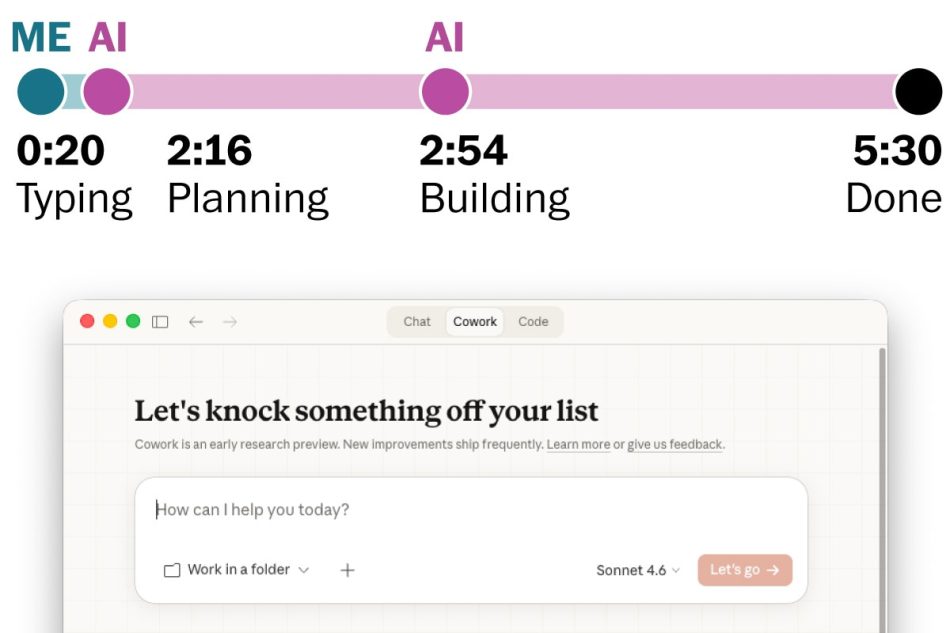

- Increased Autonomy Over Time: Claude Code, our coding agent, is working autonomously for longer stretches. Among its longest-running turns (the 99.9th percentile), the time Claude Code works before stopping nearly doubled in just three months, from under 25 minutes to over 45 minutes.

- Experience Influences Autonomy: As users gain experience with Claude Code, they tend to auto-approve actions more frequently while also interrupting less often. New users use full auto-approve in about 20% of sessions; among more experienced users, that share rises to over 40%.

- Clarification Pauses: Claude Code pauses for clarification more often than users interrupt it. On complex tasks, the agent asks for clarification more than twice as often as humans interrupt it.

- Risky Domains: While most actions performed by agents in our public API are low-risk and reversible, we observed significant activity in high-stakes areas such as software engineering, healthcare, finance, and cybersecurity.

Methodology

Studying AI agents presents unique challenges. The lack of consensus on what constitutes an agent and the rapid evolution of these systems complicate empirical research. For this study, we define an agent as an AI system equipped with tools that let it take actions, such as running code, calling external APIs, or sending messages to other agents.
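To make the tool-based definition concrete, here is a minimal sketch of how it could be applied as a filter over logged requests. The `ApiRequest` record and its field names are hypothetical and exist only for illustration; they do not reflect a real logging schema or the classifier used in this study.

```python
from dataclasses import dataclass, field


@dataclass
class ApiRequest:
    """Hypothetical, simplified view of one logged request (not a real schema)."""
    request_id: str
    tool_names: list[str] = field(default_factory=list)


def is_agentic(request: ApiRequest) -> bool:
    """Treat a request as agentic if the model was given at least one tool."""
    return len(request.tool_names) > 0


# Made-up example requests.
requests = [
    ApiRequest("r1"),                                # plain chat, no tools
    ApiRequest("r2", ["run_code"]),                  # can execute code
    ApiRequest("r3", ["call_api", "send_message"]),  # can call APIs and message agents
]
agentic_ids = [r.request_id for r in requests if is_agentic(r)]
print(agentic_ids)
```

A definition this simple is easy to apply at scale, but it counts any tool-equipped request as agentic regardless of how the tools end up being used.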

We developed a set of metrics using data from both our public API and Claude Code. Combining the two sources let us balance breadth and depth: the public API shows agentic deployments across diverse contexts, while Claude Code lets us follow individual agent workflows in detail.
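As an illustration of what session-level metrics of this kind might look like, the sketch below computes two of the quantities discussed in the key findings: the share of sessions run with full auto-approve, split by user experience, and the ratio of agent-initiated clarification pauses to human interrupts. The `Session` record, its field names, and the 50-session cutoff for "experienced" are all assumptions made for this example, and the data is synthetic.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical per-session record; field names are illustrative."""
    prior_sessions: int        # sessions the user had completed before this one
    full_auto_approve: bool    # True if every action was auto-approved
    human_interrupts: int      # times the user stopped the agent
    agent_clarifications: int  # times the agent paused to ask a question


def experience_bucket(prior_sessions: int) -> str:
    """Assumed cutoff: fewer than 50 prior sessions counts as 'new'."""
    return "new" if prior_sessions < 50 else "experienced"


def auto_approve_rate_by_bucket(sessions):
    """Share of sessions in each bucket that ran with full auto-approve."""
    totals = defaultdict(int)
    auto = defaultdict(int)
    for s in sessions:
        bucket = experience_bucket(s.prior_sessions)
        totals[bucket] += 1
        auto[bucket] += int(s.full_auto_approve)
    return {b: auto[b] / totals[b] for b in totals}


def clarification_to_interrupt_ratio(sessions):
    """Agent-initiated pauses divided by human-initiated stops (None if no stops)."""
    interrupts = sum(s.human_interrupts for s in sessions)
    clarifications = sum(s.agent_clarifications for s in sessions)
    return clarifications / interrupts if interrupts else None


# Synthetic sessions, for illustration only.
sessions = [
    Session(prior_sessions=3, full_auto_approve=False, human_interrupts=2, agent_clarifications=3),
    Session(prior_sessions=10, full_auto_approve=False, human_interrupts=1, agent_clarifications=1),
    Session(prior_sessions=200, full_auto_approve=True, human_interrupts=0, agent_clarifications=2),
    Session(prior_sessions=500, full_auto_approve=False, human_interrupts=1, agent_clarifications=2),
]
print(auto_approve_rate_by_bucket(sessions))
print(clarification_to_interrupt_ratio(sessions))
```

Aggregating counts across sessions before dividing, rather than averaging per-session ratios, avoids division by zero in sessions with no interrupts.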

Understanding Agent Autonomy

To measure how long agents operate without human intervention, we tracked the time elapsed between when Claude Code begins working and when it stops. The median turn duration has remained stable at around 45 seconds, but the longest turns are more revealing, because they reflect the most ambitious uses of Claude Code.

Between October 2025 and January 2026, the 99.9th percentile turn duration nearly doubled, a marked increase in the autonomy that experienced users grant the agent. This trend suggests that as users become more comfortable with the tool, they are willing to let it work unattended for longer.
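The gap between a stable median and a growing tail is easy to see with a small worked example. The sketch below uses a nearest-rank percentile, which is one common definition and an assumption here; the article does not specify which estimator was used. The durations are synthetic.

```python
import math
from fractions import Fraction


def percentile(values, p):
    """Nearest-rank percentile: the smallest value with at least p% of the data at or below it."""
    if not values:
        raise ValueError("values must be non-empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    # Fraction(str(p)) keeps 99.9 exact, so binary floating-point rounding
    # cannot push the rank up by one.
    rank = math.ceil(Fraction(str(p)) * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Synthetic turn durations in seconds: 998 short turns and two long ones.
durations = [45] * 998 + [1400, 3000]

median_turn = percentile(durations, 50)
tail_turn = percentile(durations, 99.9)
print(median_turn, tail_turn)
```

In this toy data the median is unaffected by the two long turns, while the 99.9th percentile is determined entirely by them, which is why the tail is the more sensitive indicator of how much unattended work users allow.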

Challenges in Studying Agents

The empirical study of agents is difficult. There is no standard definition of an agent, and agent capabilities evolve quickly, which makes it hard to conduct consistent research over time. Model providers also often have limited visibility into the architecture of their customers’ agents, which complicates the analysis of agentic activity.

Despite these challenges, our study represents a critical step toward understanding how AI agents are deployed and used in practice. We aim to continue refining our methods and sharing our findings as the adoption of AI agents becomes more widespread.

Recommendations

Based on our findings, we recommend the following for model developers, product developers, and policymakers:

- Implement robust post-deployment monitoring infrastructures to ensure effective oversight of AI agents.

- Develop new human-AI interaction paradigms that facilitate better management of autonomy and risk.

- Encourage ongoing research into the real-world applications and implications of AI agents to inform future developments.

Conclusion

Our research provides valuable insights into the autonomy of AI agents and their real-world applications. As AI technology continues to evolve, understanding how agents operate and the risks they pose will be essential for safe and effective deployment.

Frequently Asked Questions

Why is measuring AI agent autonomy important?

Measuring AI agent autonomy is crucial for understanding how much control users grant to AI systems and ensuring that these systems operate safely in various contexts, especially in high-stakes environments.

How does user experience affect the autonomy granted to agents?

As users gain experience with AI agents, they tend to grant more autonomy by auto-approving actions and intervening less frequently, indicating a growing trust in the capabilities of the agent.

What risks are associated with AI agents?

While many actions taken by AI agents are low-risk, there is potential for high-stakes errors in domains such as healthcare and cybersecurity, necessitating careful oversight and monitoring.

Note: The insights provided in this article are based on empirical research and aim to contribute to the ongoing dialogue about the safe deployment of AI agents in various contexts.