Schools are using AI counselors to track students’ mental health. Is it safe?

In recent years, schools across the United States have started using artificial intelligence (AI) counselors to monitor students’ mental health. These tools are designed to provide support and to identify students who may be at risk of self-harm or other mental health issues. The question remains: is this approach safe and effective?

The Role of AI in Mental Health Monitoring

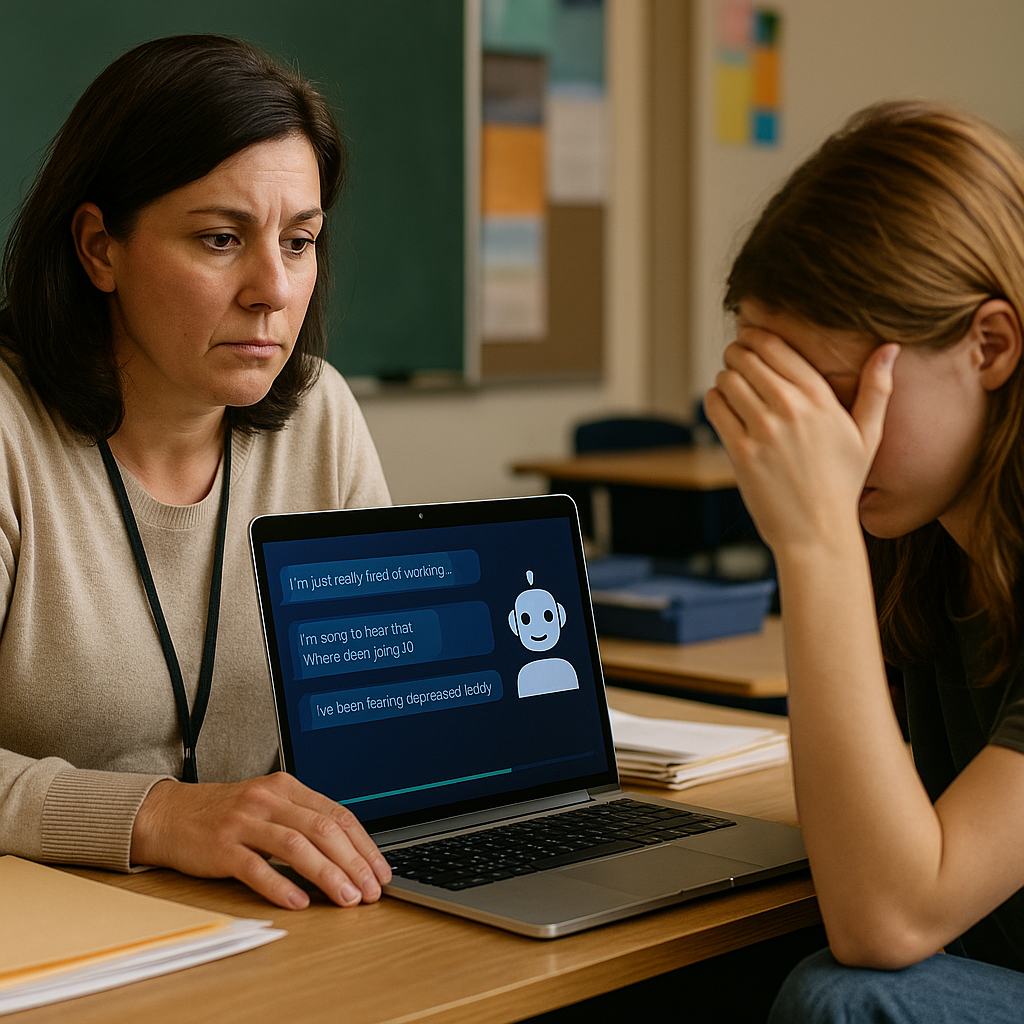

AI-powered platforms, such as Alongside, are being adopted by numerous educational institutions to assist in mental health assessments. These tools analyze students’ interactions and flag concerning behaviors or language. For instance, a middle school counselor in Putnam County, Florida, received an alert indicating that a student might be at risk of self-harm, based on what the student had typed into the chat. The alert allowed the counselor to intervene promptly, illustrating the potential benefit of AI in crisis situations.

Benefits of AI Counseling Tools

AI counseling tools offer several advantages:

- Accessibility: AI counselors are available 24/7, providing students with immediate support when human counselors may not be available.

- Reduced Stigma: Some students may feel more comfortable discussing personal issues with a chatbot than with a human, which can reduce the stigma associated with seeking help.

- Resource Efficiency: With many schools facing budget constraints and limited mental health staff, AI can help manage the workload by addressing routine issues, allowing human counselors to focus on more severe cases.

Concerns About AI in Mental Health

Despite the potential benefits, there are significant concerns regarding the use of AI in mental health support:

- Lack of Human Connection: Experts warn that AI cannot replicate the human connection and judgment that a trained counselor provides. Non-verbal cues and emotional nuances are critical in mental health assessments.

- Over-Reliance on Technology: There is a fear that schools may become overly dependent on AI tools, potentially neglecting the need for human intervention in serious cases.

- Emotional Attachment: Some students may form emotional attachments to AI counselors, which could complicate their understanding of real human relationships.

Expert Opinions

Sarah Caliboso-Soto, a licensed clinical social worker, emphasizes the importance of human interaction in mental health care. She notes that while AI can serve as a first line of defense, it should not replace traditional counseling. The subtleties of human communication, such as tone of voice and body language, are crucial to understanding a student’s emotional state, and AI cannot capture them.

Linda Charmaraman, director of the Youth, Media & Wellbeing Research Lab, points out that for many students, communicating through a chat interface feels more natural than face-to-face conversations. This generational shift towards digital communication may explain the growing acceptance of AI in mental health discussions among younger populations.

Conclusion

As schools increasingly turn to AI to support mental health initiatives, it is essential to strike a balance between technology and human interaction. While AI can provide valuable assistance in identifying at-risk students and offering immediate support, it should complement, rather than replace, the critical role of human counselors. The safety and effectiveness of AI in mental health monitoring depend on careful implementation and ongoing evaluation.

Frequently Asked Questions

What are AI counselors?

AI counselors are digital platforms that use artificial intelligence to monitor and support students’ mental health by analyzing their interactions and providing resources or alerts when concerning behavior is detected.

Are AI counselors effective?

AI counselors can be effective in identifying potential mental health issues by flagging concerning language or behavior. However, they should not replace human counselors, because they cannot interpret non-verbal cues or provide the nuanced support that trained professionals offer.

How can schools use AI counselors safely?

Schools can ensure safe use of AI by implementing it as a supplementary tool alongside human counselors, training staff to interpret AI alerts, and regularly evaluating the effectiveness and ethical implications of the technology.

Note: The integration of AI in mental health monitoring is a developing field, and ongoing research is necessary to fully understand its implications and effectiveness.