Large genome model: Open source AI trained on trillions of bases

Artificial intelligence (AI) is reshaping many fields, including genomics. One significant development is the large genome model: an open-source AI system trained on trillions of DNA bases. This article explores the methods behind such models, their potential applications, and their implications for genomics.

Understanding Large Genome Models

Large genome models are AI systems designed to analyze and interpret large volumes of genetic data. They use deep learning to identify patterns in genomic sequences and to make predictions from them. Training on extensive datasets is intended to improve accuracy on tasks such as variant calling, gene prediction, and modeling interactions between genes.
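Before a deep learning model can process a genomic sequence, the bases have to be turned into numbers. The sketch below shows two common encodings, one-hot vectors and overlapping k-mers. The function names are illustrative and are not taken from any particular model, which would normally ship its own tokenizer.

```python
from typing import List

BASES = "ACGT"  # assumed alphabet; ambiguous bases such as N are handled below


def one_hot_encode(sequence: str) -> List[List[int]]:
    """Map each base to a 4-element indicator vector.

    Bases outside ACGT (for example N) become an all-zero vector.
    """
    index = {base: i for i, base in enumerate(BASES)}
    encoded = []
    for base in sequence.upper():
        row = [0] * len(BASES)
        if base in index:
            row[index[base]] = 1
        encoded.append(row)
    return encoded


def kmer_tokens(sequence: str, k: int = 3) -> List[str]:
    """Split a sequence into overlapping k-mers (stride 1)."""
    seq = sequence.upper()
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


if __name__ == "__main__":
    print(one_hot_encode("ACGTN"))
    print(kmer_tokens("ACGTA", k=3))
```

Either representation can serve as model input; which one a given model uses is a design choice this article does not specify.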

The Significance of Open Source

Open-source software has transformed the landscape of technology by promoting collaboration and transparency. In the context of genome modeling, open-source AI allows researchers from around the world to access, modify, and improve the algorithms used in genomic analysis. This democratization of technology fosters innovation and accelerates advancements in the field.

Benefits of Open Source in Genomics

- Collaboration: Researchers can work together, sharing insights and improvements to enhance model performance.

- Transparency: Open-source models allow for scrutiny and validation of methodologies, ensuring scientific rigor.

- Accessibility: Smaller research institutions and independent scientists can leverage powerful tools without the burden of licensing fees.

Training on Trillions of Bases

A defining feature of large genome models is the scale of their training data. Trained on trillions of bases from diverse sources, these models can learn complex biological patterns. During training, the model processes large volumes of sequence data and learns the statistical regularities in it, which it can then use to make predictions about genetic traits and diseases.
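To make "learning patterns from sequence data" concrete, here is a toy sketch of a next-base prediction objective. It assumes the model is trained to predict each base from the bases before it, which this article does not state, and it swaps a simple count table in for a neural network. The function names are hypothetical.

```python
from collections import Counter, defaultdict


def train_next_base_counts(sequences, context_size=2):
    """Count which base follows each context of `context_size` bases.

    A count table stands in for the neural network here; only the
    training signal (predict the next base) is being illustrated.
    """
    counts = defaultdict(Counter)
    for seq in sequences:
        seq = seq.upper()
        for i in range(len(seq) - context_size):
            context = seq[i:i + context_size]
            next_base = seq[i + context_size]
            counts[context][next_base] += 1
    return counts


def predict_next_base(counts, context):
    """Return the most frequently observed next base, or None if the context is unseen."""
    context = context.upper()
    if context not in counts:
        return None
    return counts[context].most_common(1)[0][0]


if __name__ == "__main__":
    table = train_next_base_counts(["ACGACGACG"], context_size=2)
    print(predict_next_base(table, "AC"))
```

A real model replaces the count table with learned parameters and a much longer context, but the self-supervised setup is the same: the sequence supplies both the input and the target.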

Data Sources for Training

The data used to train these models comes from several kinds of sources, including:

- Public Genomic Databases: Repositories such as the Genome Aggregation Database (gnomAD) and The Cancer Genome Atlas (TCGA) provide a wealth of genomic information.

- Clinical Genomic Data: Data from hospitals and research institutions contribute to a more comprehensive understanding of genetic variations in different populations.

- Environmental Genomics: Information about how environmental factors influence gene expression is also incorporated into training datasets.

Applications of Large Genome Models

Large genome models trained on extensive datasets have numerous applications in genomics and medicine. Some of the most notable include:

1. Disease Prediction and Prevention

By analyzing genetic variants associated with diseases, large genome models can help identify individuals at risk for certain conditions. This predictive capability is invaluable for preventive medicine, allowing for early interventions and personalized treatment plans.

2. Drug Development

Understanding the genetic basis of diseases can lead to the discovery of new drug targets. Large genome models can also help identify which patients, based on the genetic variants they carry, are likely to respond to specific treatments, which can streamline the drug development process.

3. Personalized Medicine

With insights derived from large genome models, healthcare providers can tailor treatments to individual patients based on their genetic makeup. This approach can improve the efficacy of treatments and reduce adverse effects.

Challenges and Considerations

Despite the promising potential of large genome models, several challenges must be addressed:

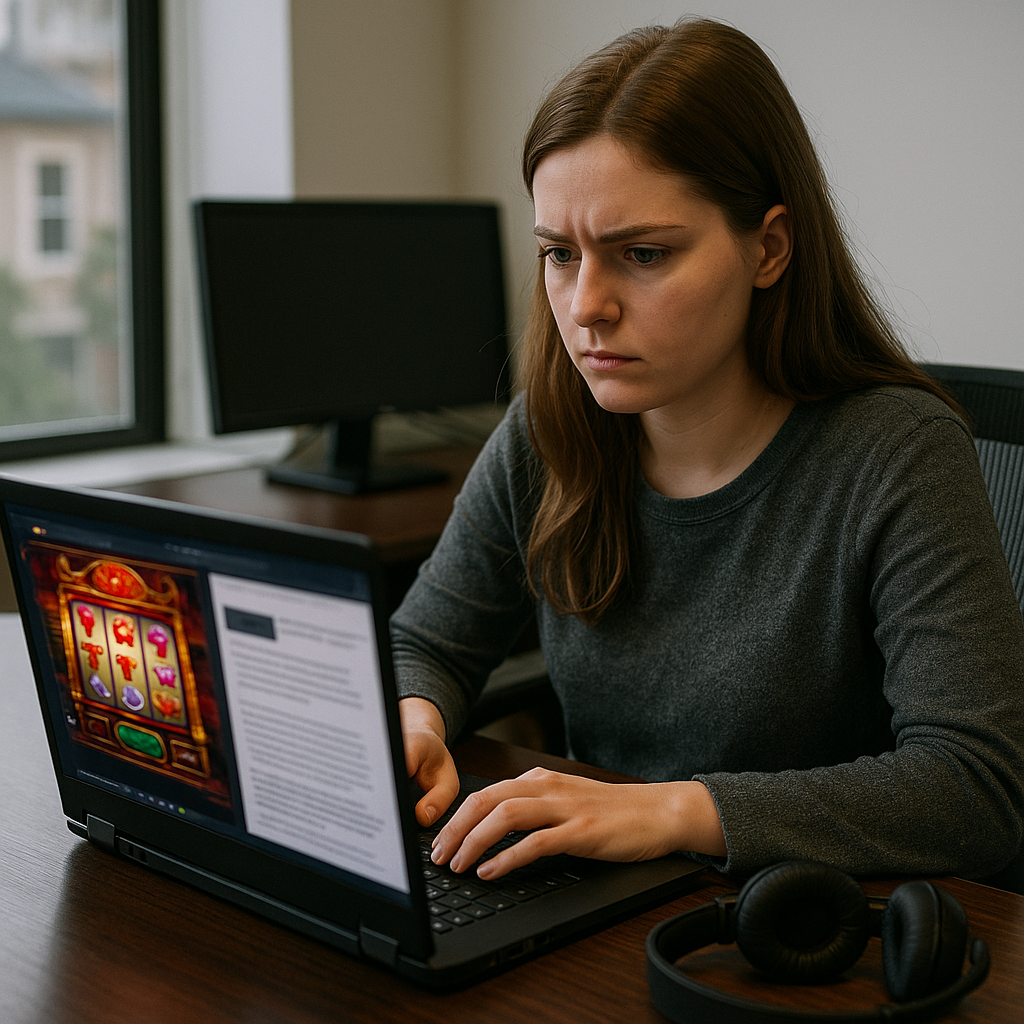

1. Data Privacy

As genomic data is highly sensitive, ensuring the privacy and security of individuals’ genetic information is paramount. Researchers must implement stringent data protection measures to safeguard personal information.

2. Model Bias

If the training data is not representative of diverse populations, the model may exhibit biases, leading to inaccurate predictions for underrepresented groups. It is crucial to ensure that datasets are inclusive and diverse.
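One simple way to check for this kind of bias is to compare model accuracy across population groups. The sketch below assumes each evaluation record carries a group label, a prediction, and the true value; the record layout and function name are illustrative rather than part of any specific toolkit.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute prediction accuracy separately for each group.

    `records` is an iterable of (group, predicted, actual) tuples.
    Returns a dict mapping each group to its fraction of correct predictions.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


if __name__ == "__main__":
    # Made-up evaluation records, for illustration only.
    example = [
        ("group_a", 1, 1),
        ("group_a", 0, 0),
        ("group_b", 1, 0),
        ("group_b", 1, 1),
    ]
    print(accuracy_by_group(example))
```

A large gap between groups suggests the training data may underrepresent some populations, although a gap alone does not identify the cause.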

3. Interpretability

Understanding how these large models arrive at their conclusions can be challenging. Enhancing the interpretability of AI models is essential for gaining trust from healthcare professionals and patients alike.
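One widely used way to probe a sequence model is in silico mutagenesis: change one base at a time and record how much the model's output shifts. The sketch below is a minimal illustration under stated assumptions. `toy_score` is a hypothetical stand-in that counts a fixed motif; a real analysis would call the trained model instead.

```python
def mutation_effects(sequence, score_fn, alphabet="ACGT"):
    """Score every single-base substitution relative to the unmodified sequence.

    Returns a list of (position, reference_base, alternate_base, score_change).
    """
    baseline = score_fn(sequence)
    effects = []
    for pos, ref in enumerate(sequence):
        for alt in alphabet:
            if alt == ref:
                continue
            mutated = sequence[:pos] + alt + sequence[pos + 1:]
            effects.append((pos, ref, alt, score_fn(mutated) - baseline))
    return effects


def toy_score(sequence):
    """Hypothetical stand-in for a model: counts occurrences of the motif TATA."""
    return sequence.count("TATA")


if __name__ == "__main__":
    effects = mutation_effects("GTATAC", toy_score)
    sensitive = sorted({pos for pos, _, _, delta in effects if delta != 0})
    print(sensitive)
```

Positions where substitutions change the score are the ones the model depends on, which gives clinicians and researchers something concrete to inspect.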

Future Directions

The future of large genome models is promising, with ongoing research aimed at improving their accuracy and applicability. Advances in computational power, data collection methods, and machine learning techniques will continue to enhance the capabilities of these models. Furthermore, collaborative efforts in the open-source community will drive innovation and lead to new breakthroughs in genomics.

Frequently Asked Questions

What is a large genome model?

A large genome model is an AI system designed to analyze and interpret extensive genomic data, identifying patterns and making predictions related to genetic sequences.

Why does open source matter for genome modeling?

Open-source development allows researchers to collaborate, share insights, and improve the algorithms used in genomic analysis, promoting innovation and accessibility in the field.

What are large genome models used for?

Large genome models are used in disease prediction, drug development, and personalized medicine, where they help clarify the role of genetic factors in health and disease.

Note: The advancements in large genome models represent a significant leap forward in the field of genomics, promising to enhance our understanding of genetics and improve healthcare outcomes.